Cite as: Stefanescu, D., 2020. Alternate Means of Digital Design Communication (PhD Thesis). UCL, London.

The research presented in this thesis was undertaken within a collaborative network of academic and industrial partners. One of the main goals of this network, InnoChain, was to leverage the potential synergies between the theoretical research embedded within the academic partners and the practical concerns arising in industry. Consequently, the research process that underpins the theoretical investigations into digital design communication presented here has an important applied, or practical, component that was in constant dialogue with the conceptual aspects of this project.

3.1 Living Laboratory

From a methodological point of view, this research project has relied on the methods and strategies of a living laboratory. The concept of a living laboratory is attributed to William Mitchell, the dean of the School of Architecture and Planning at MIT, and it evolved out of the HCI and User Experience (UX) fields (Almirall and Wareham, 2011; Guzmán et al., 2013; Kusiak, 2007). Originally, it was used by Mitchell to study how users would interact with the new technology artefacts starting to permeate buildings. Veli Pekka Niitamo described it as “a research methodology for sensing, prototyping, validating and refining complex solutions in multiple and evolving real-life contexts” (Almirall and Wareham, 2011).

More recent definitions of the term describe it as a “user centred innovation ecosystem integrating research and innovation” (Pallot, 2012). In a comparison with concurrent engineering, Guzmán et al. refer to living labs as research-based “infrastructure within which software companies and research organisations collaborate with lead users and early adopters to […] define, design, develop, and validate new products or services” (Guzmán et al., 2013). Across the literature, there are two recurring methodological directives associated with a living lab: first, involve users as early as possible in the research process, and, second, experiment in real world settings with tangible artefacts (Guzmán et al., 2013; Pallot, 2012). The reasoning behind the former is that, by engaging all stakeholders from the beginning of the research process, emerging scenarios, usages and behaviours can be discovered and can help shape and validate the research problem. The latter stresses the importance of doing “research in the wild”, as opposed to within a controlled laboratory environment, and of using real, functioning products rather than virtual mock-ups. The key difference between a prototype and a product (which could, nevertheless, itself be at a prototypical stage) is that a prototype, by itself, can only be used to simulate the accomplishment of a given task and thus implies a safe zone in which risk is eliminated. A working product, by contrast, does not eliminate the risk involved in solving a specific assignment, and thus enables a more realistic assessment. It also allows for the discovery and investigation of aspects of the research problem which would otherwise have remained invisible in a low-risk, “staged” setting.

Within the field of HCI, there is a large body of studies analysing the affordances and disadvantages of moving research out of a controlled environment. One key advantage is that an “in-situ study is likely to reveal more of the kinds of problems and behaviours people will have and adopt”, thus gaining “ecological validity” (Rogers et al., 2017). Naturally, this comes with a potential loss of control over the experiments being performed, as there is no predefined set of tasks users must perform, and no control over, or prediction of, the assumed set of interactions (Rogers et al., 2017).

Nevertheless, the greater ecological validity of “in the wild” research, staged within a living laboratory context, is a needed complement to the current state of the art in digital design communication, as there is a growing divide between research and applications in BIM and “the small practitioner” (Dainty et al., 2017). The mismatch between the needs of the industry “on the ground” and the official policies mandated by national governments or large advisory bodies is amplified by the technological cost of implementing them. This results in what Dainty et al. call a feeling of disenfranchisement across the AEC industry (Dainty et al., 2017). The problem of technological adoption is further reinforced by the AEC industry’s resistance to change, the legacy software monopolies controlling the required tooling, and the cost of upskilling the workforce (Charef et al., 2019; Hong et al., 2019; Barbosa et al., 2017).

While these problems can be seen and addressed individually, taken as a whole they are symptoms of the wicked problem defining AEC’s relationship with technology. As mentioned earlier in the literature review chapter, the historical study undertaken by the BICRP in the 1970s recommended in its final report the inclusion of sociological and organisational factors in the modernisation effort of the construction industry, because of the continually observed differences between industry-mandated formal approaches and the informal methodologies that appeared in response (Wild, 2004). This problem was recently raised again by Koch et al., who argue that studies in the field reveal “a piecemeal adoption of BIM and Lean” methodologies, and a general “overrating of the impact of productivity”, noting that, for example, an unresearched question is the “controlling and disciplining effect on site managers feeling forced to follow standard recipes […]” (Koch et al., 2019).

Consequently, one may claim that the state of software and its usage within AEC contrasts with today’s wider environment, where software spreads “virally” and users become committed to the digital applications they use. To counter this trend, the research principles of a living laboratory – namely, involve all stakeholders as early as possible, and experiment in real world settings – offer an ideal methodological framework for concurrently defining and analysing the problems in the field of digital design communication, in a way that does not exclude people who are not usually involved in the research process.

3.2 Tackling a Wicked Problem

While, on an abstract level, it is safe to assume that the issue of digital design communication is a wicked problem that simultaneously involves technological, social and political issues, the work presented in this thesis is aimed at answering the three specific research questions outlined in the introduction (composable vs. complete object model, object-centred vs. file-based data classification, object-centred vs. file-based data transaction). This was achieved through a mixture of mutually reinforcing theoretical, technical and applied analyses that were enabled by the software developed as instrumentation, named Speckle. The development of Speckle is itself an act of design, albeit a technical one, and is therefore also “wicked”; it was performed within the context of a living laboratory.

It is important to note that, given a wicked problem, there is no singular “objective” resolution thereof (Rittel and Webber, 1973; Weber and Khademian, 2008). This introduces a certain bias in the analyses associated with the three research questions. To clarify, this research project does understand that technology can be seen as an application of means to achieve ends (Heidegger, 1978). Nevertheless, this view ignores the contemporary dynamic in which, to some extent, the digital medium has an agency of its own. In other words, the “ends”, or goals, are also fungible and malleable (Blitz, 2014). Therefore, when considering the software developed as research instrumentation, it is important to note that it is an active, rather than subservient or passive, actor that both serves and shapes that which it was originally designed to enable: in the present case, digital design communication.

This translates into the caveat that the problem is dependent on its solution; consequently, the research presented can be seen as biased and dependent on the way it was approached. The affordances of new digital technologies, such as the internet and its communicative potential, exert a certain “push” which, when matched with the industry’s “pull”, gives rise to innovation (Pallot, 2012). Accordingly, the aim of the research questions is to identify the relevant connections between the two. This research project attempts to abstract away potential inconsistencies; the conclusions presented should be normatively sound, and their validity should be verifiable outside the specific technical implementation of the research.

3.3 Speckle: Research Instrumentation & Industry

During the course of this research project, a digital communication software platform was developed in order to serve as the vehicle for testing theoretical assumptions in the real-life context provided by the industry partners. This research instrumentation platform, named Speckle, evolved alongside input from the InnoChain industry partner network, as well as from the open source community that grew around it.

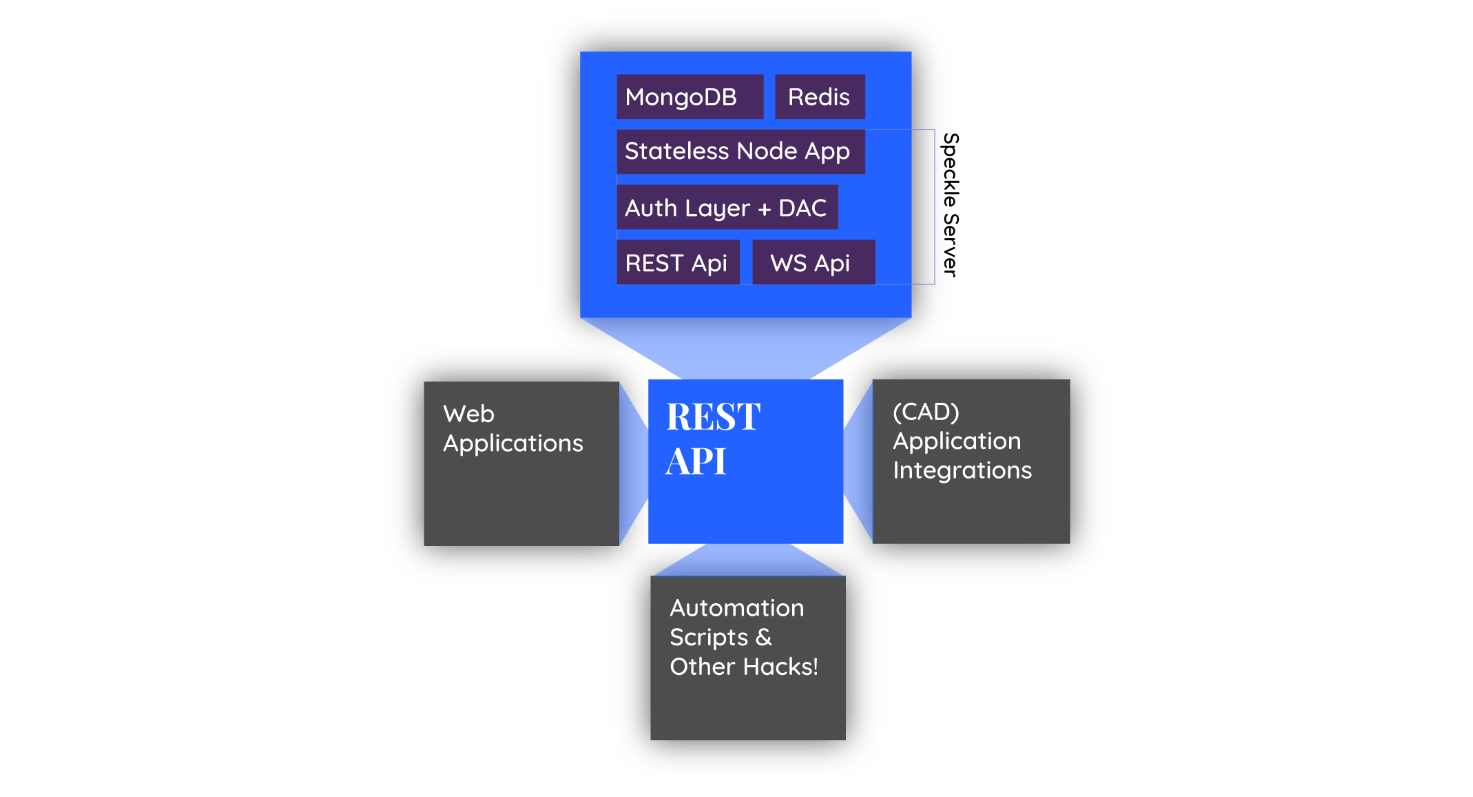

The main goal of the Speckle platform is to enable stakeholders to exchange design data from some of the most commonly used authoring applications in AEC. Succinctly put, it consists of a server application (the backend) that orchestrates the storage, classification and transaction of design elements, and a host of client applications that integrate with CAD software packages and allow for the extraction of design information from within them. All the interactions between the client applications and the server happen through a REST API. The client applications themselves are not restricted to CAD software connectors: they include stand-alone desktop tools developed by others, as well as web applications (SPAs/PWAs), WebGL 3D viewers, etc. (Figure 7).
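To make the division of roles between backend and clients more concrete, the following is a minimal sketch in Python, offered as an illustration only. It does not reproduce Speckle's actual API: the class `InMemoryServer`, the route names in the docstrings, the use of a content hash as object identifier and the stream name `facade-study` are all assumptions introduced here, and an in-memory object stands in for the HTTP layer so that the example runs without a network.

```python
import hashlib
import json


class InMemoryServer:
    """Stand-in for the backend: stores objects and groups them into streams."""

    def __init__(self):
        self.objects = {}   # object id -> design object (dict)
        self.streams = {}   # stream id -> ordered list of object ids

    def post_objects(self, objs):
        """Hypothetical `POST /objects`: store objects and return their ids."""
        ids = []
        for obj in objs:
            payload = json.dumps(obj, sort_keys=True).encode("utf-8")
            obj_id = hashlib.sha256(payload).hexdigest()
            self.objects[obj_id] = obj
            ids.append(obj_id)
        return ids

    def put_stream(self, stream_id, object_ids):
        """Hypothetical `PUT /streams/{id}`: set the objects a stream holds."""
        self.streams[stream_id] = list(object_ids)

    def get_stream(self, stream_id):
        """Hypothetical `GET /streams/{id}`: return the stream's objects."""
        return [self.objects[i] for i in self.streams[stream_id]]


def send(server, stream_id, design_objects):
    """What a sending client (e.g. a CAD connector) does: upload, then publish."""
    ids = server.post_objects(design_objects)
    server.put_stream(stream_id, ids)
    return ids


def receive(server, stream_id):
    """What a receiving client (another connector or a web viewer) does."""
    return server.get_stream(stream_id)


# Illustrative data only: two walls extracted from an authoring application.
server = InMemoryServer()
walls = [
    {"type": "Wall", "height": 3.0},
    {"type": "Wall", "height": 2.7},
]
sent_ids = send(server, "facade-study", walls)
received = receive(server, "facade-study")
```

In this sketch the sender and the receiver never talk to each other directly; both only address the server, which is the property that lets heterogeneous clients (CAD connectors, desktop tools, web viewers) take part in the same exchange.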

The content, form and architecture of the core Speckle ecosystem evolved throughout the duration of the project, and this evolution is documented in Appendix 1, Tooling History. Most importantly, it underwent three complete re-writes in quick succession that encompassed large technical changes and shifts of focus, reflecting both input from the industry and (software) architectural dead-ends (i.e., the system would not scale to the demand of real-life projects). The analyses presented going forward do not reflect on this evolution, and rely solely on the last version of Speckle, namely 1.x.x. Currently, the codebase for the core platform components stands at approximately 54,834 lines of code[8]. Taking into account the wider ecosystem of the platform, contributed to by the community members, it amounts to approximately 65,390 lines of code.

Throughout the project, Speckle was developed along the methodological lines of a living laboratory. Specifically:

- It was developed openly in a collaborative environment (GitHub) and released under a liberal open source license (MIT). This allowed the project to build the trust of stakeholders, as they would not be contributing to a project that they do not own. In turn, this resulted in continuous input from the technically-minded audience members. While this introduced an extra management overhead, the benefit of this approach was that the audience expanded beyond the original InnoChain industry network, thus providing a richer ecosystem within which the project’s research agenda could be pursued.

- Because deploying the Speckle server in the cloud represented a big technical barrier for the intended audience, it was offered as a free service solely for experimental and testing purposes. The server’s name is Hestia[9]. By eliminating this adoption barrier, the friction in getting input from the stakeholders involved was greatly reduced. It also had the benefit of allowing non-technical persons to contribute. Lastly, it allowed for “in the wild observations” that otherwise would have been impossible.

- Between late 2017 and mid-2018, as part of this project, three focus group meetings (workshops) were organised. They played a key role in validating both the technical development path of Speckle and the theoretical research outcomes embodied by it. These workshops involved participants directly from the project’s research network, but were also open to external stakeholders, forming a wide cross-section of the AEC industry (Bryman, 2012). While the face to face discussions with industry experts helped sharpen and clarify the research and development direction, the involvement of a diverse group of end-users helped identify problems from “on the ground”; the complementary mix of roles among the focus group meetings’ participants was a key factor in revealing a full picture of the research issues addressed. The reports and minutes of these focus group meetings are presented in Appendix B, Industry Exchanges.

- Finally, during the three-year duration of this project, approximately eight months were spent on secondment at HENN, a large German architectural design company, and at McNeel Europe, the software developers behind Rhinoceros 3D, a widely used CAD application, and Grasshopper. The secondments with HENN were key in collecting requirements for early stage digital design communication, as well as in identifying key points of friction in their day-to-day design process. Furthermore, HENN served as the earliest Speckle test-bed for end users. McNeel Europe put resources forward to help with development tasks as well as end-user outreach. They also provided invaluable input from the point of view of a developer and tool-maker, and identified key integration requirements that helped structure the shared code between software applications and Speckle.

This research instrumentation platform, Speckle, was used to test, validate and analyse alternative means of digital design communication against existing practices that are currently available to the industry. It was invaluable in collecting the data, both qualitative and quantitative, necessary to answer the three research questions, forming the backbone for a mixed-method approach (Bryman et al., 2008). Specifically:

- with regard to the first research question, pertaining to data representation, Speckle was used to analyse the impact of how objects from existing established schemas and end-user defined ontologies are represented and used in communicative exchanges, and how they can be managed at scale; essentially, this allowed an examination of digital data representation through the interrogation of empirical data;

- with regard to the question on data classification, “live” data from Speckle[10] was used to empirically compare the file-based strategy with an object-based one, assess the number of sub-classifications a model is broken down into, as well as extract qualitative case studies supporting the research;

- lastly, in the analysis on data transaction, it was used to quantify the impact of differential (delta) updates as opposed to file-based approaches, investigate the limits thereof, as well as assess the affordances and limitations emerging from real-life usage.
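The contrast between differential (delta) updates and file-based exchange mentioned in the last point can be sketched as follows. This is an illustrative Python example under stated assumptions, not the measurement procedure or implementation used in this thesis: objects are identified by a hash of their content, payload size is approximated by the length of their JSON serialisation, and the function names `object_id`, `payload_size` and `delta`, as well as the sample model of columns, are hypothetical.

```python
import hashlib
import json


def object_id(obj):
    """Content hash used as the object's identity (an assumption of this sketch)."""
    payload = json.dumps(obj, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def payload_size(objs):
    """Bytes needed to serialise a list of objects as JSON."""
    return len(json.dumps(objs, sort_keys=True).encode("utf-8"))


def delta(previous, current):
    """Return (objects to upload, ids to drop) between two model versions."""
    prev_ids = {object_id(o) for o in previous}
    curr_ids = {object_id(o) for o in current}
    added = [o for o in current if object_id(o) not in prev_ids]
    removed = prev_ids - curr_ids
    return added, removed


# Version 1 of a model: 100 columns at different positions.
v1 = [{"type": "Column", "x": i, "height": 3.0} for i in range(100)]
# Version 2: a single column is made taller; everything else is untouched.
v2 = [dict(o) for o in v1]
v2[42]["height"] = 3.5

added, removed = delta(v1, v2)
file_based_bytes = payload_size(v2)   # the whole model is sent again
delta_bytes = payload_size(added)     # only the changed object is sent
```

Under these assumptions, a change to one object out of a hundred leads to a single new object being transmitted, whereas a file-based exchange would transmit the full model again; how far this advantage holds in practice is precisely what the analysis on data transaction investigates.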

By virtue of being a tangible artefact, Speckle was (and is) employed on real-life projects within the larger industry network. It thus complemented theoretical tests with the pressures of actual production use, which revealed various aspects of digital communication that informed the analyses herein. Furthermore, by developing it under an open source license, and within reach of a technical audience’s continuous scrutiny, it was able to greatly expand the size, and hence the relevancy, of the ecosystem in which the research questions have been analysed. Summing up, the methodology followed throughout this research project leveraged the academic and industrial network offered by InnoChain, and it emphasised early and intensive interactions with the stakeholders involved. In order to properly articulate a living laboratory setting, the theoretical research was complemented by a strong technical development component, which helped provide a tangible petri dish for the analyses undertaken to answer the research questions.

Footnotes

[8] As measured using the cloc command line utility, excluding metadata files and any external libraries.

[9] Hestia is the ancient Greek goddess of the hearth, home and dwellings. The server can be accessed at https://hestia.speckle.works/api (accessed 12th October 2019).

[10] Specifically, data was mined from the free test-server mentioned above, Hestia.